How the Brain Distinguishes Memories From Perceptions

Perception and memory use some of the same areas of the brain. Small but significant differences in the neural representations of memories and perceptions may enable us to distinguish which one we are experiencing at any moment.

Kristina Armitage/Quanta Magazine

Introduction

Memory and perception seem like entirely distinct experiences, and neuroscientists used to be confident that the brain produced them differently, too. But in the 1990s neuroimaging studies revealed that parts of the brain that were thought to be active only during sensory perception are also active during the recall of memories.

“It started to raise the question of whether a memory representation is actually different from a perceptual representation at all,” said Sam Ling, an associate professor of neuroscience and director of the Visual Neuroscience Lab at Boston University. Could our memory of a beautiful forest glade, for example, be just a re-creation of the neural activity that previously enabled us to see it?

“The argument has swung from being this debate over whether there’s even any involvement of sensory cortices to saying ‘Oh, wait a minute, is there any difference?’” said Christopher Baker, an investigator at the National Institute of Mental Health who runs the learning and plasticity unit. “The pendulum has swung from one side to the other, but it’s swung too far.”

Even if there is a very strong neurological similarity between memories and experiences, we know that they can’t be exactly the same. “People don’t get confused between them,” said Serra Favila, a postdoctoral scientist at Columbia University and the lead author of a recent Nature Communications study. Her team’s work has identified at least one of the ways in which memories and perceptions of images are assembled differently at the neurological level.

Blurry Spots

When we look at the world, visual information about it streams through the photoreceptors of the retina and into the visual cortex, where it is processed sequentially in different groups of neurons. Each group adds new levels of complexity to the image: Simple dots of light turn into lines and edges, then contours, then shapes, then complete scenes that embody what we’re seeing.

In the new study, the researchers focused on a feature of vision processing that’s very important in the early groups of neurons: where things are located in space. The pixels and contours making up an image need to be in the correct places or else the brain will create a shuffled, unrecognizable distortion of what we’re seeing.

The researchers trained participants to memorize the positions of four different patterns on a backdrop that resembled a dartboard. Each pattern was placed in a very specific location on the board and associated with a color at the center of the board. Each participant was tested to make sure that they had memorized this information correctly — that if they saw a green dot, for example, they knew the star shape was at the far left position. Then, as the participants perceived and remembered the locations of the patterns, the researchers recorded their brain activity.

The brain scans allowed the researchers to map out how neurons recorded where something was as well as how they later remembered it. Each neuron attends to one space, or “receptive field,” in the expanse of your vision, such as the lower left corner. A neuron is “only going to fire when you put something in that little spot,” Favila said. Neurons that are tuned to a certain spot in space tend to cluster together, making their activity easy to detect in brain scans.

Previous studies of visual perception established that neurons in the early, lower levels of processing have small receptive fields, and neurons in later, higher levels have larger ones. This makes sense because the higher-tier neurons are compiling signals from many lower-tier neurons, drawing in information across a wider patch of the visual field. But the bigger receptive field also means lower spatial precision, producing an effect like putting a large blob of ink over North America on a map to indicate New Jersey. In effect, visual processing during perception is a matter of small crisp dots evolving into larger, blurrier but more meaningful blobs.

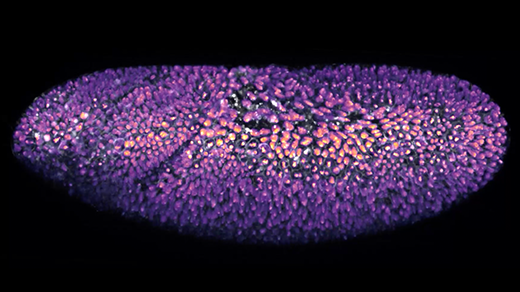

Serra Favila, a researcher at Columbia University, and her colleagues studied how the neural representations of perceptions and memories of images differ. The escalating sizes of the “receptive fields” of the neurons in the visual cortex seem to hold the key.

Courtesy of Serra Favila

But when Favila and her colleagues looked at how perceptions and memories were represented in the various areas of the visual cortex, they discovered major differences.

As participants recalled the images, the receptive fields in the highest level of visual processing were the same size they had been during perception. But this time the receptive fields stayed that size down through all the other levels involved in painting the mental image. The remembered image was a large, blurry blob at every stage.

This suggests that when the memory of the image was stored, only the highest-level representation of it was kept. When the memory was experienced again, all the areas of the visual cortex were activated, but their activity was driven by that less precise version as its input.

So depending on whether information is coming from the retina or from wherever memories are stored, the brain handles and processes it very differently. Some of the precision of the original perception gets lost on its way into memory, and “you can’t magically get it back,” Favila said.

A “really beautiful” aspect of this study was that the researchers could read out the information about a memory directly from the brain rather than rely on the human subject to report what they were seeing, said Adam Steel, a postdoctoral researcher at Dartmouth College. “The empirical work that they did, I think, is really outstanding.”

A Feature or a Bug?

But why are memories recalled in this “blurrier” way? To find out, the researchers created a model of the visual cortex that had different levels of neurons with receptive fields of increasing size. They then simulated an evoked memory by sending a signal through the levels in reverse order. As in the brain scans, the spatial blurriness seen in the level with the largest receptive field persisted through all the rest. That suggests that the remembered image forms in this way due to the hierarchical nature of the visual system, Favila said.
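The reverse-signal idea can be illustrated with a toy simulation. The sketch below is not the researchers' model. It assumes a one-dimensional visual field, three levels and made-up Gaussian pooling widths (`POOLING_SIGMAS`), and the function names `perceive`, `recall` and `spread` are invented here for illustration. It shows only the qualitative point: pooling can add blur but never remove it, so a signal that starts at the blurriest level stays blurry all the way down.

```python
import math

# Hypothetical pooling widths (arbitrary position units) for the connections
# feeding three successive levels. Illustrative values, not measurements.
POOLING_SIGMAS = [1.0, 2.0, 4.0]


def gaussian_kernel(sigma):
    """Normalized Gaussian weights, truncated at three standard deviations."""
    radius = max(1, int(3 * sigma))
    weights = [math.exp(-0.5 * (x / sigma) ** 2) for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def pool(activity, sigma):
    """Each unit sums a Gaussian-weighted neighborhood of the input level."""
    kernel = gaussian_kernel(sigma)
    radius = len(kernel) // 2
    n = len(activity)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = i + k - radius
            if 0 <= j < n:
                acc += w * activity[j]
        out.append(acc)
    return out


def spread(activity):
    """Standard deviation of the activity profile over position (its blur)."""
    total = sum(activity)
    mean = sum(i * v for i, v in enumerate(activity)) / total
    variance = sum(v * (i - mean) ** 2 for i, v in enumerate(activity)) / total
    return math.sqrt(variance)


def perceive(stimulus):
    """Feedforward pass: returns activity at each level, early to late."""
    levels = []
    activity = stimulus
    for sigma in POOLING_SIGMAS:
        activity = pool(activity, sigma)
        levels.append(activity)
    return levels


def recall(top_activity):
    """Feedback pass: start from the stored top-level pattern and send it
    back down through the same between-level connections in reverse order."""
    levels = [top_activity]
    activity = top_activity
    # Skip the first width: it links the stimulus to level 1, not two levels.
    for sigma in reversed(POOLING_SIGMAS[1:]):
        activity = pool(activity, sigma)
        levels.append(activity)
    levels.reverse()  # report early to late, matching perceive()
    return levels


# A single point of light in the middle of a 201-position visual field.
stimulus = [0.0] * 201
stimulus[100] = 1.0

perceived = perceive(stimulus)
recalled = recall(perceived[-1])  # only the top-level pattern is "remembered"

perceived_widths = [spread(level) for level in perceived]
recalled_widths = [spread(level) for level in recalled]

for level, (p, r) in enumerate(zip(perceived_widths, recalled_widths), start=1):
    print(f"level {level}: perceived width {p:.2f}, recalled width {r:.2f}")
```

In this toy version the perceived widths grow from level to level, while every recalled width is at least as large as the top-level one. Whether the lower levels come out exactly as blurry as the top, as in the brain scans, or somewhat blurrier depends on how the feedback connections are modeled, which this sketch does not try to settle.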

One theory about why the visual system is arranged hierarchically is that it helps with object recognition. If receptive fields were tiny, the brain would need to integrate more information to make sense of what was in view; that could make it hard to recognize something big like the Eiffel Tower, Favila said. The “blurrier” memory image might be the “consequence of having a system that’s been optimized for things like object recognition.”

But it’s not clear “whether it’s a feature or a bug,” said Thomas Naselaris, an associate professor at the University of Minnesota. He was not involved in the new study, but in a 2020 study he came to a similar conclusion: that perception and memory look very different in the brain. He favors the idea that the difference is advantageous, perhaps in helping to differentiate perceptions from memories. “A person whose mental imagery had all of the detail and precision of their seen imagery could get confused easily,” he said.

The blurriness could also help to prevent storage of unnecessary information. Maybe the important thing isn’t to remember where each pixel sits in the field of vision, but that the pixels belong to a family member or a friend, Favila said.

“It’s not like the visual system is incapable of generating highly detailed, vivid and precise imagery,” Naselaris said. People have reported very vivid visual images, for example, when they’re in the “hypnagogic” state between sleep and wakefulness. The brain “just tends not to do it during waking hours.”

Favila and her team are hoping to explore whether similar processing happens with other aspects of a visual memory, such as shapes or colors. They are especially eager to examine how these differences in perception and memory guide behaviors.

Perception and memory “are different; our experience of them is different, and pinning down exactly the ways in which they’re different will be important to understanding how memory is expressed,” Favila said. The differences were “lurking in the data the whole time.”