How to Guarantee the Safety of Autonomous Vehicles

Señor Salme for Quanta Magazine

Introduction

Driverless cars and planes are no longer the stuff of the future. In the city of San Francisco alone, two taxi companies have collectively logged 8 million miles of autonomous driving through August 2023. And more than 850,000 autonomous aerial vehicles, or drones, are registered in the United States — not counting those owned by the military.

But there are legitimate concerns about safety. For example, in a 10-month period that ended in May 2022, the National Highway Traffic Safety Administration reported nearly 400 crashes involving automobiles using some form of autonomous control. Six people died as a result of these accidents, and five were seriously injured.

The usual way of addressing this issue — sometimes called “testing by exhaustion” — involves testing these systems until you’re satisfied they’re safe. But you can never be sure that this process will uncover all potential flaws. “People carry out tests until they’ve exhausted their resources and patience,” said Sayan Mitra, a computer scientist at the University of Illinois, Urbana-Champaign. Testing alone, however, cannot provide guarantees.

Mitra and his colleagues can. His team has managed to prove the safety of lane-tracking capabilities for cars and landing systems for autonomous aircraft. Their strategy is now being used to help land drones on aircraft carriers, and Boeing plans to test it on an experimental aircraft this year. “Their method of providing end-to-end safety guarantees is very important,” said Corina Pasareanu, a research scientist at Carnegie Mellon University and NASA’s Ames Research Center.

Their work involves guaranteeing the results of the machine-learning algorithms that are used to inform autonomous vehicles. At a high level, many autonomous vehicles have two components: a perception system and a control system. The perception system tells you, for instance, how far your car is from the center of the lane, or what direction a plane is heading in and what its angle is with respect to the horizon. The system operates by feeding raw data from cameras and other sensors to machine-learning algorithms based on neural networks, which re-create the environment outside the vehicle.

These assessments are then sent to a separate system, the control module, which decides what to do. If there’s an upcoming obstacle, for instance, it decides whether to apply the brakes or steer around it. According to Luca Carlone, an associate professor at the Massachusetts Institute of Technology, while the control module relies on well-established technology, “it is making decisions based on the perception results, and there’s no guarantee that those results are correct.”

To provide a safety guarantee, Mitra’s team worked on ensuring the reliability of the vehicle’s perception system. They first assumed that it’s possible to guarantee safety when a perfect rendering of the outside world is available. They then determined how much error the perception system introduces into its re-creation of the vehicle’s surroundings.

The key to this strategy is to quantify the uncertainties involved, known as the error band — or the “known unknowns,” as Mitra put it. That calculation comes from what he and his team call a perception contract. In software engineering, a contract is a commitment that, for a given input to a computer program, the output will fall within a specified range. Figuring out this range isn’t easy. How accurate are the car’s sensors? How much fog, rain or solar glare can a drone tolerate? But if you can keep the vehicle within a specified range of uncertainty, and if the determination of that range is sufficiently accurate, Mitra’s team proved that you can ensure its safety.

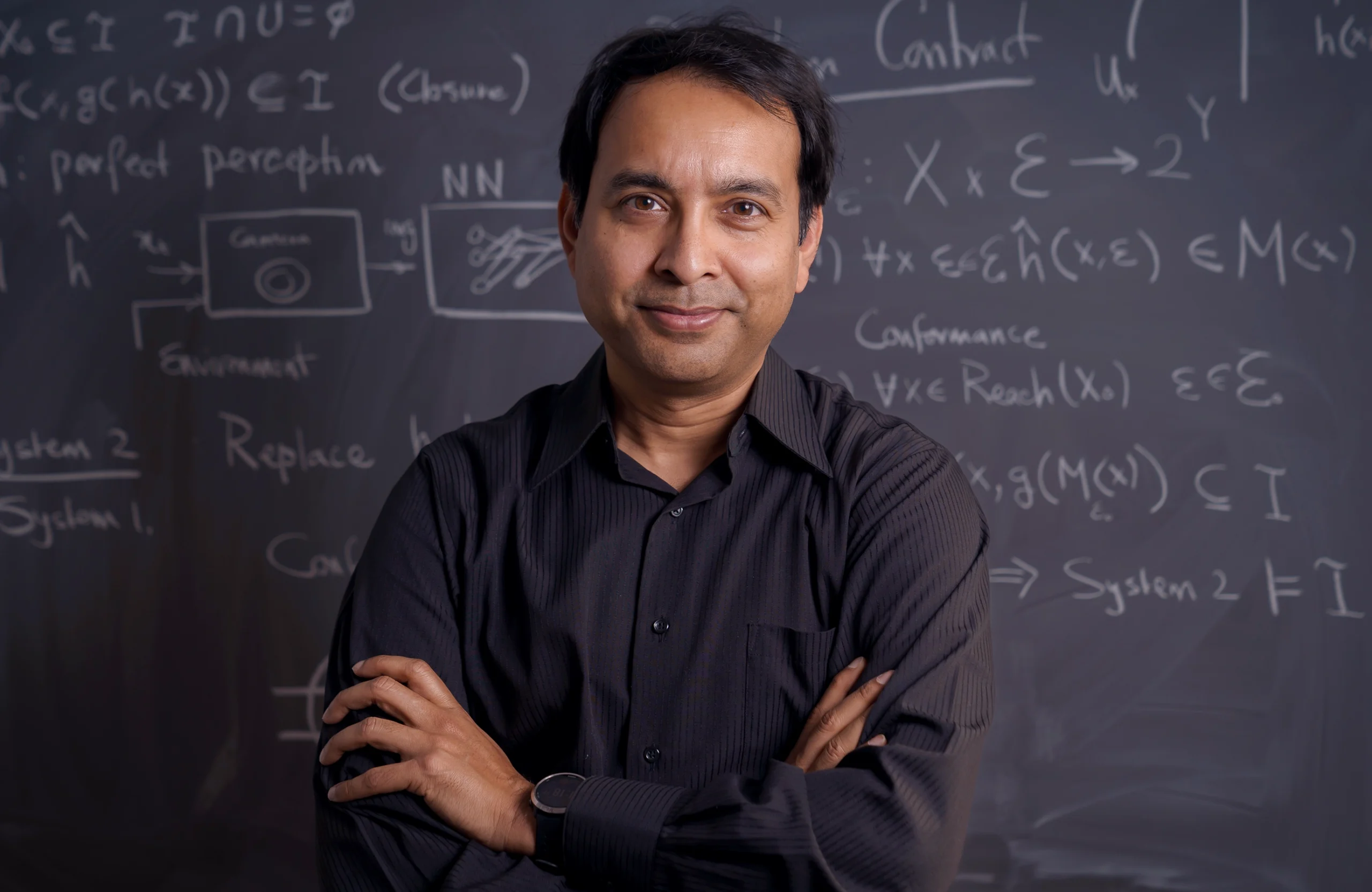

Sayan Mitra, a computer scientist at the University of Illinois, Urbana-Champaign, has helped develop a systematic approach for guaranteeing the safety of certain autonomous systems.

Virgil Ward II

It’s a familiar situation for anyone with an imprecise speedometer. If you know the device is never off by more than 5 miles per hour, you can still avoid speeding by always staying 5 mph below the speed limit (as indicated by your untrustworthy speedometer). A perception contract affords a similar guarantee of the safety of an imperfect system that depends on machine learning.
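The speedometer analogy can be written out as a few lines of Python. This is only an illustrative sketch of the reasoning in the paragraph above, not code from Mitra's team; the function names and the 65 mph limit are hypothetical choices made for the example.

```python
def max_safe_reading(speed_limit_mph: float, max_error_mph: float) -> float:
    """Highest speedometer reading that still guarantees the true speed
    stays at or below the limit, given a known worst-case error."""
    if max_error_mph < 0:
        raise ValueError("max_error_mph must be non-negative")
    return speed_limit_mph - max_error_mph


def is_guaranteed_safe(reading_mph: float, speed_limit_mph: float,
                       max_error_mph: float) -> bool:
    """True if even the worst case (true speed = reading + error bound)
    is still within the speed limit."""
    return reading_mph + max_error_mph <= speed_limit_mph


if __name__ == "__main__":
    limit, error_bound = 65.0, 5.0  # hypothetical values
    print(max_safe_reading(limit, error_bound))
    print(is_guaranteed_safe(60.0, limit, error_bound))
    print(is_guaranteed_safe(61.0, limit, error_bound))
```

The guarantee holds only as long as the error bound itself is correct, which is why establishing that bound is the hard part.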

“You don’t need perfect perception,” Carlone said. “You just want it to be good enough so as not to put safety at risk.” The team’s biggest contributions, he said, are “introducing the entire idea of perception contracts” and providing the methods for constructing them. They did this by drawing on techniques from the branch of computer science called formal verification, which provides a mathematical way of confirming that the behavior of a system satisfies a set of requirements.

“Even though we don’t know exactly how the neural network does what it does,” Mitra said, the team showed that it’s still possible to prove numerically that the uncertainty of a neural network’s output lies within certain bounds. And, if that’s the case, then the system will be safe. “We can then provide a statistical guarantee as to whether (and to what degree) a given neural network will actually meet those bounds.”
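One generic way to picture a statistical guarantee of this kind is to measure how often a perception system's error stays inside the claimed bound on test samples, then discount that rate for the limited sample size. The sketch below uses Hoeffding's inequality for the discount. That is an assumption made for illustration, since the article does not say which statistical method the team uses, and the function name and sample data are hypothetical.

```python
import math
from typing import Sequence, Tuple


def conformance_lower_bound(errors: Sequence[float], bound: float,
                            delta: float = 0.05) -> Tuple[float, float]:
    """Return (observed rate, lower confidence bound) for the probability
    that the absolute perception error stays within `bound`.

    Assumes the samples are independent and identically distributed.
    With probability at least 1 - delta, the true rate is no lower than
    the returned lower bound (one-sided Hoeffding inequality).
    """
    n = len(errors)
    if n == 0:
        raise ValueError("need at least one sample")
    if not 0.0 < delta < 1.0:
        raise ValueError("delta must be in (0, 1)")
    within = sum(1 for e in errors if abs(e) <= bound)
    observed = within / n
    margin = math.sqrt(math.log(1.0 / delta) / (2.0 * n))
    return observed, max(0.0, observed - margin)


if __name__ == "__main__":
    # Hypothetical lane-offset errors in meters: 1,000 samples, all small.
    samples = [0.1] * 1000
    print(conformance_lower_bound(samples, bound=0.3))
```

Even when every sample falls inside the bound, the lower bound stays below 1, which reflects the "to what degree" part of the guarantee: more samples tighten it, but a finite test set never yields certainty.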

The aerospace company Sierra Nevada is currently testing these safety guarantees while landing a drone on an aircraft carrier. This problem is in some ways more complicated than driving cars because of the extra dimension involved in flying. “In landing, there are two main tasks,” said Dragos Margineantu, AI chief technologist at Boeing, “aligning the plane with the runway and making sure the runway is free of obstacles. Our work with Sayan involves getting guarantees for those two functions.”

“Simulations using Sayan’s algorithm show that the alignment [of an airplane prior to landing] does improve,” he said. The next step, planned for later this year, is to employ these systems while actually landing a Boeing experimental airplane. One of the biggest challenges, Margineantu noted, will be figuring out what we don’t know — “determining the uncertainty in our estimates” — and seeing how that affects safety. “Most errors happen when we do things that we think we know — and it turns out that we don’t.”